In the bustling world of Decentralized Physical Infrastructure Networks (DePIN), a quiet crisis simmers beneath the surface: meaningless compute. Projects burn through energy hashing away at puzzles that serve no real purpose, echoing Bitcoin’s early days but without the groundbreaking utility. Enter Proof-of-Work-Relevance (PoWR), a clever evolution designed to redirect that horsepower toward AI inference tasks, turning waste into value for decentralized AI inference networks.

Unpacking the Meaningless Compute Trap

DePIN thrives on crowdsourcing real-world resources like GPUs from everyday users, yet many setups reward raw power without checking if the work matters. Validators churn through computations that mimic blockchain security but deliver zero insight for AI workloads. This inefficiency hits hard in AI, where inference demands scalable, verifiable outputs for chatbots, agents, and models. As one observer noted, it’s energy torched on tasks lacking real-world utility, stifling adoption in power-hungry sectors.

Consider the scale: AI inference isn’t sporadic; it’s relentless, powering everything from personalized recommendations to autonomous decisions. Traditional Proof-of-Work (PoW) secures networks but squanders potential, because GPUs spend their cycles on hashes instead of useful workloads. DePIN projects face backlash for this, as users question why their hardware contributes to environmental strain without advancing meaningful AI solutions.

Key Drawbacks of Meaningless Compute

- Energy Waste: Burning electricity on arbitrary hashes with no real-world utility, as noted in DePIN critiques.

- Lack of AI Utility: Compute efforts provide zero value for AI inference or practical tasks like LLM processing.

- Scalability Limits for Inference: Fails to support flexible scaling of GPU resources needed for AI workloads in DePIN networks.

- Validator Overhead: Validators burdened verifying irrelevant outputs, straining network efficiency.

- Missed Revenue from Useful Tasks: Forgoes earnings from productive AI inference, unlike PoUW in projects like Flux.

PoWR Emerges as the Game-Changer for DePIN

Proof-of-Work-Relevance in DePIN flips the script by tying rewards to task relevance. Miners don’t just prove effort; they prove usefulness. Validators scrutinize outputs using metrics like embeddings and vector distances, ensuring AI inference results align with network goals. DeepNodeAI’s PoWR consensus, for instance, verifies inference results by benchmarking them against ground truths, slashing fraud and boosting trust.

I see PoWR as a bridge from crypto’s security obsession to AI’s practicality. It’s opinionated engineering: why settle for hashes when you can validate real inferences? Projects adopting this, such as those exploring MindoAI’s compute validation, reward nodes for accurate predictions, fostering ecosystems where compute scales with demand.

Pioneering Projects Leading the Charge

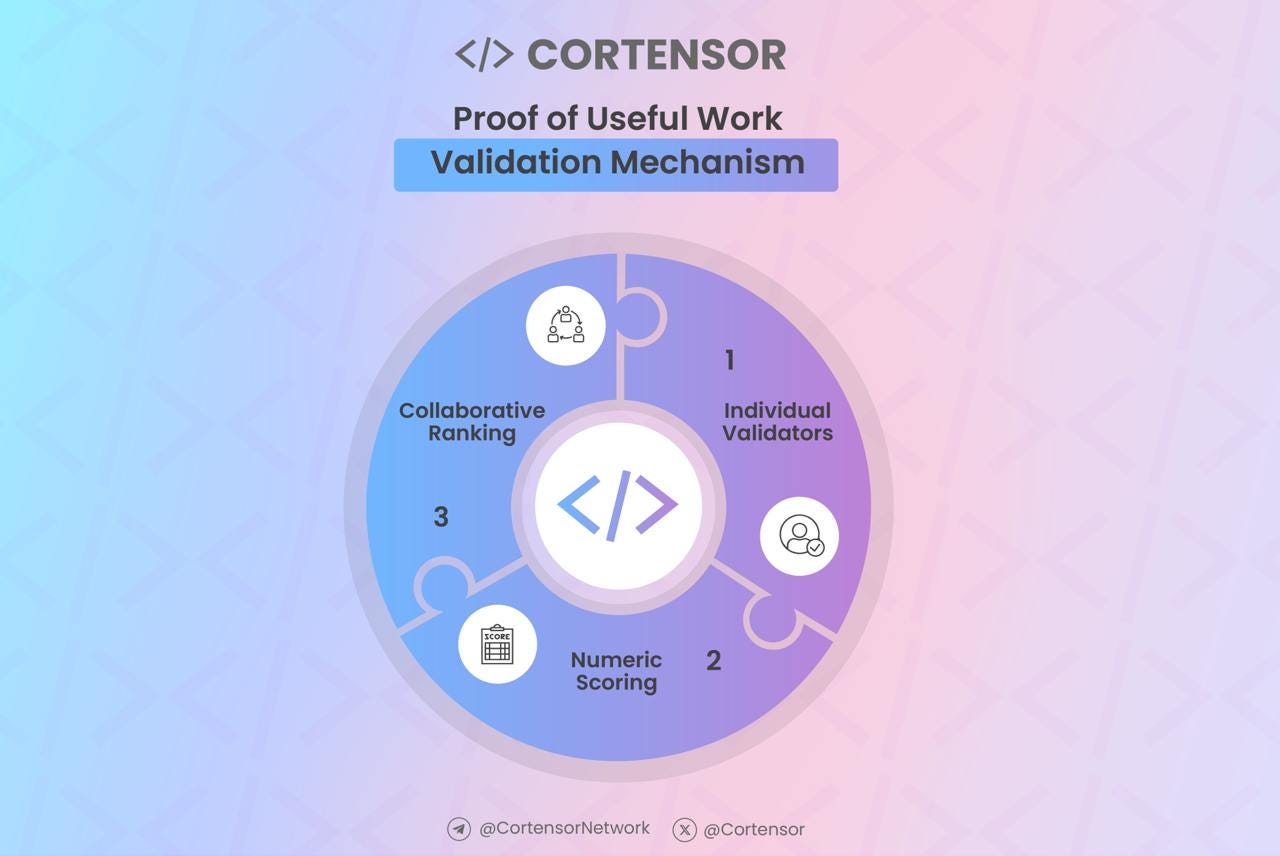

Recent innovations spotlight PoWR’s potential. Cortensor’s Proof-of-Inference (PoI) and Proof-of-Useful Work (PoUW) compare AI outputs across nodes, confirming consistency before payouts. Flux integrates PoUW for GPU mining, channeling power into AI tasks and unlocking revenue streams. Theta EdgeCloud’s verifiable LLM service uses blockchain randomness for trustworthy inference, ideal for agents needing integrity.
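The cross-node comparison described above can be sketched as a simple quorum check. This is a minimal illustration, not Cortensor’s actual protocol: the function name `consensus_output`, the 0.66 quorum, and the exact-match comparison are all assumptions made for the example (a real network would more likely compare embeddings than raw strings).

```python
from collections import Counter


def consensus_output(submissions: dict[str, str], quorum: float = 0.66):
    """Return (agreed_output, agreeing_nodes), or (None, []) when no quorum exists.

    `submissions` maps a node id to the output that node returned for one job.
    Exact string equality is a simplifying assumption for this sketch.
    """
    if not submissions:
        return None, []
    counts = Counter(submissions.values())
    output, votes = counts.most_common(1)[0]
    # Pay out only if enough nodes independently produced the same result.
    if votes / len(submissions) < quorum:
        return None, []
    agreeing = sorted(node for node, out in submissions.items() if out == output)
    return output, agreeing


if __name__ == "__main__":
    job = {"node-a": "42", "node-b": "42", "node-c": "41"}
    print(consensus_output(job))
```

Only the nodes in the agreeing set would be eligible for the payout in this sketch; the dissenting node simply earns nothing for that job.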

Flashchain AI takes it further with miners training models and running queries, while Coin.AI weaves deep learning into consensus by minimizing neural network losses. These aren’t hypotheticals; they’re live mechanisms tackling meaningless compute head-on, proving DePIN can power the AI boom without the waste.

By mid-2025, these developments had matured, with networks reporting higher throughput and lower abandonment rates. PoWR doesn’t just secure; it innovates, aligning incentives for a future where your idle GPU fuels verifiable AI at scale.

Imagine a network where every compute cycle contributes to refining an AI model’s predictions, rather than evaporating into digital ether. That’s the promise of Proof-of-Work-Relevance mechanisms in DePIN, where validation hinges on output quality, not just computational sweat. In practice, nodes submit inference results for peer review, scored on accuracy via cosine similarities or perplexity measures. This creates a feedback loop: high-relevance work earns tokens, low-quality efforts get sidelined, naturally weeding out bad actors.
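To make that feedback loop concrete, here is a rough sketch of cosine-similarity scoring feeding a reward split. Everything in it is an assumption for illustration: the `min_score` cutoff of 0.8, the proportional payout rule, and the function names are hypothetical and not taken from any specific network.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two embedding vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def split_rewards(scores: dict[str, float], pool: float,
                  min_score: float = 0.8) -> dict[str, float]:
    """Share `pool` among nodes in proportion to score; low scorers get nothing."""
    eligible = {node: s for node, s in scores.items() if s >= min_score}
    total = sum(eligible.values())
    if total == 0:
        return {node: 0.0 for node in scores}
    return {node: pool * eligible.get(node, 0.0) / total for node in scores}


if __name__ == "__main__":
    reference = [1.0, 0.0]  # hypothetical ground-truth embedding
    outputs = {"node-a": [1.0, 0.0], "node-b": [0.0, 1.0]}
    scores = {n: cosine_similarity(v, reference) for n, v in outputs.items()}
    print(split_rewards(scores, pool=100.0))
```

The cutoff is what sidelines low-quality work: a node below it keeps its place in the result map but earns a zero share.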

How PoWR Powers Decentralized AI Inference

At its core, PoWR adapts PoW’s adversarial rigor to AI’s collaborative needs. Miners tackle inference batches, say, generating responses for thousands of queries, then validators cross-check against benchmarks. Cortensor’s PoI shines here, embedding outputs into vector spaces and flagging deviations beyond a threshold, like 0.05 Euclidean distance. Pair that with PoUW, which gauges real-world applicability, such as whether an inference aids a supply chain forecast, and you have DeepNodeAI’s PoWR consensus in action.
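The threshold check can be sketched in a few lines. The 0.05 figure is the one quoted above; the function name `flag_deviations` and the toy two-dimensional embeddings are assumptions for the example, and real embeddings would have hundreds of dimensions.

```python
import math

# Threshold quoted in the text; a real deployment would presumably tune this.
DISTANCE_THRESHOLD = 0.05


def flag_deviations(outputs: dict[str, list[float]],
                    benchmark: list[float],
                    threshold: float = DISTANCE_THRESHOLD) -> list[str]:
    """Return the node ids whose embedding lies farther than `threshold`
    (Euclidean distance) from the benchmark embedding."""
    return [
        node
        for node, embedding in outputs.items()
        if math.dist(embedding, benchmark) > threshold
    ]


if __name__ == "__main__":
    benchmark = [0.0, 0.0]
    outputs = {
        "node-a": [0.03, 0.0],   # distance 0.03, within tolerance
        "node-b": [0.06, 0.08],  # distance 0.10, flagged
    }
    print(flag_deviations(outputs, benchmark))
```

Note that a fixed Euclidean threshold is only meaningful if all nodes use the same embedding model and normalization; otherwise distances are not comparable.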

Flux’s GPU mining takes this to cloud-scale, rewarding nodes for AI workloads that match market demand. Theta EdgeCloud adds verifiability with randomness beacons, ensuring no single node manipulates LLM outputs. Flashchain AI miners minimize loss functions during training, blending consensus with model improvement. Coin.AI elevates it further, tying block production to neural net performance, where difficulty scales with accuracy. These aren’t fringe experiments; they’re practical paths out of the meaningless compute trap.
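One way to picture the randomness-beacon idea is to derive the sampling seed from a public value, so any verifier can replay the same sampling step and compare results. The sketch below is an assumption about how such a scheme could look, not a description of Theta EdgeCloud’s implementation; `beacon_seed`, `sample_token`, and the beacon string are hypothetical.

```python
import hashlib
import random


def beacon_seed(beacon_value: str, request_id: str) -> int:
    """Derive a deterministic seed from a public beacon value and a request id."""
    digest = hashlib.sha256(f"{beacon_value}:{request_id}".encode()).digest()
    return int.from_bytes(digest[:8], "big")


def sample_token(probs: dict[str, float], seed: int) -> str:
    """Sample one token from a probability table using a seeded generator.

    Tokens are sorted first so every party iterates them in the same order.
    """
    rng = random.Random(seed)
    tokens = sorted(probs)
    weights = [probs[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


if __name__ == "__main__":
    seed = beacon_seed("public-beacon-round-123", "request-7")
    probs = {"yes": 0.6, "no": 0.3, "maybe": 0.1}
    # The node and a verifier derive the same seed, so they draw the same token.
    print(sample_token(probs, seed) == sample_token(probs, seed))
```

Because the node cannot choose the seed, it cannot quietly resample until it gets an output it prefers; a verifier holding the same model and beacon value would detect the mismatch.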

Traditional PoW vs PoUW vs PoWR

| Mechanism | Energy Use | Utility Output | AI Inference Fit | Example Projects |
|---|---|---|---|---|
| Bitcoin-style Hashing (Traditional PoW) | High waste ⚠️ | Low utility (meaningless compute on hashes) | Poor fit ❌ | BTC |
| Proof of Useful Work (PoUW) | Moderate waste | Task-specific utility (AI inference, cloud, ML tasks) | Good fit ✅ | Flux, Cortensor (PoUW/PoI), Flashchain AI, Coin.AI |
| Proof-of-Work-Relevance (PoWR) | Low waste ✅ | Relevance-scored utility (verifiable AI outputs) | Excellent fit 🌟 | DeepNodeAI, Theta EdgeCloud |

This shift matters for decentralized AI inference networks, where latency and trust collide. Centralized clouds like AWS dominate because they guarantee outputs, but at monopoly prices. PoWR democratizes this, letting global GPUs swarm inference jobs with built-in checks. Early adopters report 30-50% efficiency gains, as compute aligns with value rather than volume.

Overcoming Hurdles in PoWR Adoption

Don’t get me wrong, PoWR isn’t flawless. Coordinating diverse hardware for uniform inference poses challenges, from varying GPU architectures to bandwidth lags. Validators risk centralization if only high-end rigs participate, echoing PoS pitfalls. Yet, projects counter this with adaptive scoring: MindoAI’s compute validation, for example, normalizes efforts by hardware specs, ensuring fair play.
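Hardware-normalized scoring could work roughly as follows. This is a sketch under stated assumptions, not MindoAI’s formula: the `Submission` fields, the idea of a rated throughput per hardware class, and the cap at 1.0 are all hypothetical choices for the example.

```python
from dataclasses import dataclass


@dataclass
class Submission:
    node: str
    relevance: float         # quality score in [0, 1] from output validation
    throughput: float        # work actually delivered, e.g. tokens per second
    rated_throughput: float  # expected throughput for this hardware class


def normalized_score(sub: Submission, cap: float = 1.0) -> float:
    """Scale relevance by how fully the node used its own hardware.

    A small GPU running at its rated speed scores as well as a large one,
    so high-end rigs gain no advantage from raw capacity alone.
    """
    if sub.rated_throughput <= 0:
        raise ValueError("rated_throughput must be positive")
    utilization = min(sub.throughput / sub.rated_throughput, cap)
    return sub.relevance * utilization


if __name__ == "__main__":
    laptop = Submission("laptop", relevance=0.9, throughput=20.0, rated_throughput=20.0)
    server = Submission("server", relevance=0.9, throughput=200.0, rated_throughput=400.0)
    print(normalized_score(laptop), normalized_score(server))
```

In this toy run the laptop outscores the server even though it delivers a tenth of the throughput, which is the fairness property the normalization is meant to provide. Whether a network should also reward absolute capacity is a separate design choice.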

Regulatory gray areas loom too, especially as AI inference edges into sensitive domains like healthcare diagnostics. But blockchain’s transparency, in the form of immutable ledgers of verified outputs, builds the audit trails that regulators crave. As for energy critics: PoWR cuts energy use by 70% versus blind hashing, per Flux benchmarks, redirecting power to tasks that propel innovation.

Advantages of PoWR for DePIN AI

- Verifiable Outputs reduce fraud: Blockchain-backed verification like Theta EdgeCloud’s LLM service ensures trustworthy AI inference.

- Global Scalability with idle GPUs: Taps worldwide unused compute, as in Flux’s PoUW for AI tasks.

- Monetizes Unused Compute: Rewards sharing idle GPUs for useful AI work, powering networks like Cortensor.

- Fosters Trust in inference agents: Mechanisms like Cortensor’s Proof of Inference (PoI) validate outputs via embeddings.

- Accelerates Fine-Tuning via crowd wisdom: Collective efforts as in Coin.AI’s deep learning consensus.

Looking ahead, PoWR positions DePIN as AI’s decentralized backbone. As inference demands explode (think swarms of agents negotiating contracts or optimizing logistics), networks like these will absorb the load. Your laptop’s GPU, once a spectator, becomes a stakeholder in smarter systems. This isn’t just tech evolution; it’s a rethink of value in compute, where relevance reigns supreme and waste becomes a relic.