In the high-stakes arena of AI inference in 2026, where every GPU hour counts toward scalable deployments, Render Network and io.net stand out as leading DePIN GPU networks. Render Network's RNDR token trades at $1.41, down a modest 0.007% over the last 24 hours within a range of $1.36 to $1.42, a stability that suggests a maturing network. Both platforms promise large savings over centralized providers like AWS, where H100 GPUs command $4.50-$5.50 per hour on-demand. Decentralized alternatives advertise 60-80% discounts, but which one actually comes out ahead for cost-efficient AI inference? Let's dissect their architectures, pricing, and real-world performance.

Render Network's Expansion Powers Reliable AI Compute

Render Network began as a 3D rendering powerhouse but pivoted aggressively into AI compute via its Dispersed.com platform, launched in December 2025. The platform aggregates over 5,600 node operators running enterprise-grade NVIDIA H200 and AMD MI300X GPUs at 85-95% utilization. The network has rendered over 65 million frames, evidence that it handles production-scale workloads for AI studios and robotics firms. For AI inference, Render's pricing shines: H100 GPUs range from $1.20-$1.80 per hour, against $4.50-$5.50 on AWS. That efficiency stems from token incentives that keep nodes online even during peak demand. Render's track record, which includes millions of distributed GPU jobs and a market cap that once surpassed $2 billion, positions it as the steady choice for teams that need proven throughput at decentralized prices.
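To see how the quoted hourly rates map onto the advertised 60-80% discount, the arithmetic can be sketched in a few lines of Python. This is only an illustration using the price ranges quoted in this article; the function name `savings_range` is made up for the example.

```python
def savings_range(alt_low, alt_high, base_low, base_high):
    """Return (smallest, largest) savings as fractions of the baseline price."""
    # Smallest savings: priciest alternative vs. cheapest baseline.
    smallest = 1 - alt_high / base_low
    # Largest savings: cheapest alternative vs. priciest baseline.
    largest = 1 - alt_low / base_high
    return smallest, largest


# Hourly H100 rates quoted in this article (USD).
AWS_H100 = (4.50, 5.50)
RENDER_H100 = (1.20, 1.80)

smallest, largest = savings_range(*RENDER_H100, *AWS_H100)
print(f"Render vs AWS: {smallest:.0%} to {largest:.0%} cheaper")
```

On these figures the range works out to roughly 60% to 78%, consistent with the 60-80% discount cited for decentralized providers.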

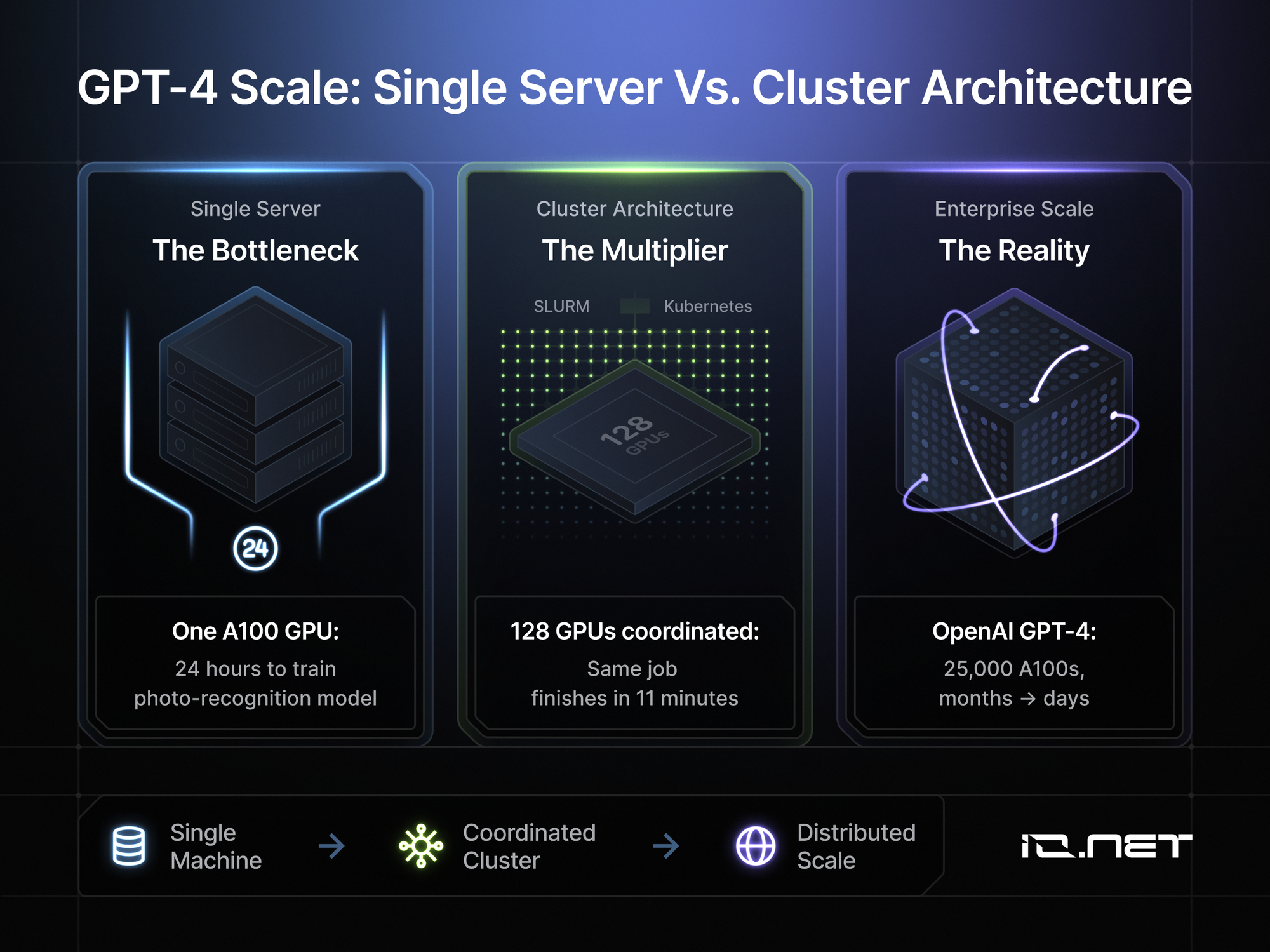

io.net's Focus Delivers Rapid, Flexible GPU Access

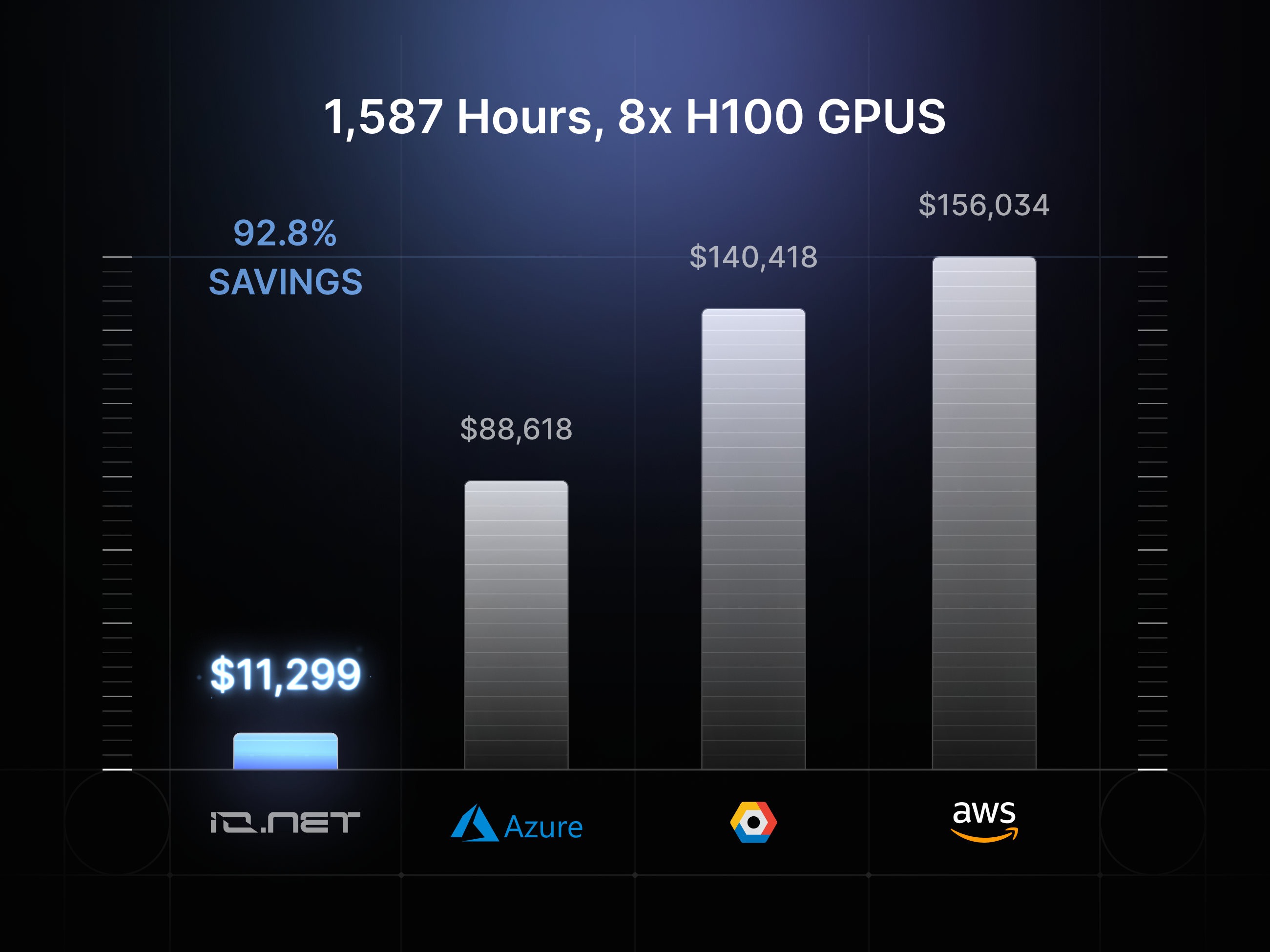

io.net focuses on AI and machine learning, cutting compute costs by up to 90% versus traditional clouds through a marketplace of underutilized GPUs from miners, data centers, and edge providers. It supports RTX 4090s, A100s, and H100s and can spin up clusters in minutes, which suits dynamic inference needs like real-time model serving. Sources describe io.net as offering some of the cheapest GPU servers for AI training and inference in 2026, since it draws on idle resources for deep supply. While exact per-hour rates aren't quoted publicly, the platform's model is reported to undercut competitors, in line with the broader DePIN trend of 50-80% inference savings. For startups migrating models, io.net's architecture simplifies scaling without vendor lock-in, making it a nimble contender for AI inference.

Direct Pricing Showdown for H100 Inference Workloads

Pinpointing the cheaper option requires comparing real metrics. Render's H100s at $1.20-$1.80/hour benefit from high utilization and a mature node base, yielding predictable costs for sustained inference runs. io.net publishes aggregate savings claims rather than per-hour rates; it is said to match or beat Render through its large GPU pool, with the strongest savings claimed on consumer-grade cards like the RTX 4090 for lighter inference. Decentralized providers like these undercut AWS by 60-80%, but Render's pricing transparency gives it a quantifiable lead for enterprise H100 needs. VanEck's projections peg crypto AI revenues at $10.2 billion in the base case by 2030, fueled by such networks, yet in the near term Render's 5,600+ node operators signal deeper liquidity. For a forward look:

Render Network (RNDR) Price Prediction 2027-2032

Forecasts based on DePIN growth, AI compute adoption, cost advantages over centralized providers, and competition with io.net

| Year | Minimum Price | Average Price | Maximum Price | YoY % Change (Avg) |
|---|---|---|---|---|
| 2027 | $1.00 | $3.00 | $6.00 | +113% |
| 2028 | $1.50 | $5.00 | $12.00 | +67% |
| 2029 | $2.50 | $8.00 | $20.00 | +60% |
| 2030 | $4.00 | $12.00 | $30.00 | +50% |
| 2031 | $6.00 | $18.00 | $45.00 | +50% |
| 2032 | $9.00 | $25.00 | $60.00 | +39% |
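The YoY column follows from the average prices, with the 2026 price of $1.41 as the starting point. A minimal sketch for reproducing it is below; the helper name `yoy_changes` is illustrative, and the inputs are the forecast figures from the table, not market data.

```python
START_PRICE = 1.41  # 2026 RNDR price quoted in this article (USD)

# Average forecast prices from the table above (USD).
avg_forecast = {2027: 3.00, 2028: 5.00, 2029: 8.00,
                2030: 12.00, 2031: 18.00, 2032: 25.00}


def yoy_changes(start, series):
    """Return {year: percent change vs. the previous year}, rounded to whole percent."""
    changes = {}
    prev = start
    for year in sorted(series):
        changes[year] = round((series[year] - prev) / prev * 100)
        prev = series[year]
    return changes


for year, pct in yoy_changes(START_PRICE, avg_forecast).items():
    print(f"{year}: +{pct}%")
```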

Price Prediction Summary

RNDR is forecasted to experience significant growth from its 2026 price of $1.41, driven by AI inference demand and DePIN expansion. Minimum prices reflect bearish scenarios like regulatory hurdles or competition; averages assume steady adoption; maxima capture bullish market cycles and tech breakthroughs, potentially reaching $60 by 2032.

Key Factors Affecting Render Network Price

- Explosive AI compute demand with 60-90% cost savings vs. AWS and centralized clouds

- Render's expansion to AI via Dispersed.com, supporting H100/H200 GPUs with 85-95% utilization

- Proven network scale (5,600+ nodes, 65M+ frames rendered)

- Competition from io.net and others, pressuring pricing but expanding market

- Crypto market cycles, with potential bull runs post-2026 correction

- Regulatory clarity on DePINs and favorable blockchain-AI integrations

- VanEck's $10.2B AI crypto revenue projection by 2030 boosting sector multiples

Disclaimer: Cryptocurrency price predictions are speculative and based on current market analysis. Actual prices may vary significantly due to market volatility, regulatory changes, and other factors. Always do your own research before making investment decisions.

io.net counters on deployment speed, with clusters reported to come up in under 2 minutes, which could tip the scales for bursty inference. Binance comparisons note that Render's edge in creative rendering carries over into AI, while io.net democratizes access to raw compute. Between the two, Render's network depth may stabilize long-term pricing, but io.net's agility could capture volatile demand in 2026. DePIN GPU networks are reshaping crypto AI infrastructure, forcing users to weigh maturity against flexibility.

Token incentives further differentiate the two. Render's RNDR, steady at $1.41, rewards node operators for consistent uptime, which supports the 85-95% utilization. io.net's IO token, though not priced here, drives similar participation by tapping idle miner GPUs, potentially adding enough supply to push inference rates very low.

Head-to-Head Metrics: Pricing, Speed, and Scale 🚀

| Feature 🚀 | Render Network 💎 | io.net ⚡ |
|---|---|---|
| H100 Price/Hour 💰 | $1.20-$1.80 (transparent for steady LLM inference; 60-80% savings vs AWS) | No public per-hour rate; up to 90% savings vs traditional clouds claimed |
| Node Count 🌐 | 5,600+ nodes (proven scale) | Large idle miner pool (count not stated) |
| Utilization 📊 | 85-95% | High via miners (no figure stated) |
| Deployment Time ⏱️ | Not quoted; positioned on proven enterprise reliability | <2 min clusters |
| Focus 🎯 | Rendering + AI inference | AI/ML workloads only |

These metrics highlight Render's maturity for enterprise inference, where downtime kills ROI. io.net's miner-sourced GPUs, including RTX 4090s, suit cost-sensitive training workloads that carry over to inference, per guides to the cheapest GPU servers for AI training in 2026. In my view, Render wins for predictable budgets, while io.net is the disruptive option for agile teams chasing every penny.

Real-World Benchmarks and Use Cases

Render's 65 million rendered frames show experience that carries over to AI: robotics firms use its H200s for vision models, reportedly hitting sub-second latencies at a fraction of cloud costs. io.net powers ML startups deploying H100 clusters for inference on tokenized models, with sources noting seamless migrations. Lists of top GPU providers show both undercutting RunPod and others, but Render's history of processing millions of jobs gives it the edge on reliability. For Render, think Hollywood VFX studios adapting to agentic AI; io.net suits indie developers fine-tuning open models on demand.

Render vs io.net Pros

- Render Network: Proven 5,600+ nodes with 85-95% utilization for steady AI inference.

- Render Network: Transparent $1.20-$1.80/hr H100 pricing vs AWS $4.50-$5.50/hr.

- io.net: Up to 90% savings vs centralized clouds.

- io.net: <2-min clusters for fast deployment.

- io.net: Flexible GPUs like RTX 4090s for bursty inference.

The choice depends on your workload. For sustained inference, Render's depth minimizes cost variance. For ephemeral scaling, io.net's liquidity shines. Both feed into VanEck's projected $10.2B in AI crypto revenue by 2030, but 2026 favors hybrid setups that monitor real-time liquidity on both networks.

Strategic Picks for 2026 Inference

Deploy Render if your AI pipeline needs battle-tested throughput; its expansion via Dispersed.com locks in savings without surprises. Opt for io.net when speed trumps all, especially for lighter inference on consumer GPUs. Track RNDR at $1.41 (24h range $1.36-$1.42) as an adoption proxy. DePIN's promise is to unlock GPUs globally, compressing costs further as networks mature. Forward-thinking teams will test both, blending Render's backbone with io.net's agility for the best inference economics. The race is intensifying, but the data crowns no absolute winner; stack your needs against these specs and run the numbers.
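As a starting point for running those numbers, here is a minimal budgeting sketch. It uses only the hourly H100 ranges quoted in this article; the 730-hour month, the `duty_cycle` parameter, and the function name `monthly_cost` are assumptions made for illustration. io.net is left out because no public per-hour rate is quoted for it.

```python
HOURS_PER_MONTH = 730  # assumption: average month length (8,760 hours / 12)

# Hourly H100 price ranges quoted in this article (USD, low and high).
H100_RATES = {
    "AWS on-demand": (4.50, 5.50),
    "Render Network": (1.20, 1.80),
}


def monthly_cost(rate_per_hour, gpus=1, duty_cycle=1.0, hours=HOURS_PER_MONTH):
    """Estimate monthly spend in USD.

    duty_cycle is the fraction of the month the GPUs are actually rented:
    1.0 for sustained inference, lower for bursty workloads.
    """
    return rate_per_hour * gpus * duty_cycle * hours


# Example: 8 GPUs serving a model around the clock.
for provider, (low, high) in H100_RATES.items():
    low_cost = monthly_cost(low, gpus=8)
    high_cost = monthly_cost(high, gpus=8)
    print(f"{provider}: ${low_cost:,.0f} to ${high_cost:,.0f} per month")
```

Lowering `duty_cycle` (for example to 0.25) approximates a bursty workload, which is where fast cluster start-up matters more than the steady hourly rate.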
